2.14.19 – SIW – TIMOTHY J. PASTORE, ESQ.

Companies deploying these technologies must use human intelligence to ensure artificial intelligence does not present unnecessary liability.

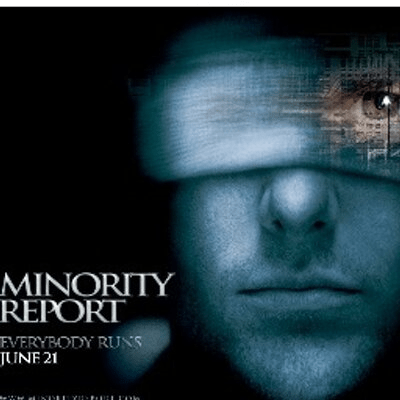

The movie “Minority Report” takes place in Washington, D.C., in the year 2054, where a special police unit called PreCrime relies on the pre-visualizations of three mutated humans – called “Precogs” – to predict murders before they happen. The Precogs (or at least two of the three) foresee a murder committed by Captain John Anderton (played by Tom Cruise), who then flees from his own colleagues to try to recover the “minority report” of the third Precog and prove his innocence.

Knowing that a citywide optical recognition system will identify his every move, Anderton goes to a black-market doctor for a risky eye transplant to fool the system and avoid being tracked. I will offer no further spoilers here, except to say the optical biometric technology is pretty cool…and the eye transplant is very disturbing.

While predicting murder before it happens may be science fiction, the use of artificial intelligence in security systems, like optical or facial recognition, is very real. Among other examples, the U.S. Secret Service announced in Nov. 2018 that it would operate a facial recognition pilot at the White House to biometrically confirm the identity of certain volunteers around the complex. Ultimately, the goal is to determine whether facial recognition technologies can help identify known troublemakers before they come into direct contact with law enforcement.

One of the areas selected for this pilot program is an open setting, where individuals are free to approach from any angle, and where environmental factors – lighting, distance, shadows, physical obstructions, etc. – will vary. The second area is more controlled, evenly lighted, and free from obstructions. At both locations, the technology can capture facial images at distances of up to approximately 20 yards; the captured images are then cross-checked against an existing electronic database to determine if there is a match of interest to the Secret Service.

The Legal Implications

I am not a Precog, so I cannot predict with certainty what might happen in the future; however, I can predict that artificial intelligence will continue to be incorporated into various security applications – facial recognition among them.

Whether this type of technology is deployed by the Secret Service at the White House or by private or government actors at other locations, it carries various legal implications – most notably for privacy.

This is because a facial image – particularly when captured, analyzed and stored – is considered personally identifiable information and is, therefore, subject to applicable privacy laws. When deploying these systems for clients, be sure the clients take the following steps to limit legal liability:

1. Be transparent. Since individual members of the public may be unaware that their facial images are being captured, processed algorithmically and compared to existing images in a pre-determined gallery/database, notice of the surveillance should be sent through public communications channels (as the Secret Service did with its project). Alternatively, notice can be posted in the physical location where the surveillance is to occur. This affords the public some opportunity to “opt out” of the surveillance.

2. Only use what is needed. Collect only the personally identifiable information (such as a facial image) that is directly relevant and necessary to accomplish the specified purpose(s), and archive it only for as long as is necessary to fulfill the specified purpose(s). Further, images determined not to be a match to images in the comparison image gallery can and should be immediately and automatically deleted from the database.

3. Secure the data. The data collected should be stored on a standalone server dedicated to the surveillance operation and not made available for routine use in other operations. Only a limited set of personnel should have access to the data, and those with access should be trained on appropriate privacy practices so that the data is used only as intended.

4. Use human intervention. Yes, there is still a role for humans to play! Facial images received from the video stream may incorrectly show as a match, resulting in personally identifiable information being retained unnecessarily. Since there is always a risk of false-positive matches, operators can mitigate this risk by limiting the underlying database and confirming matches with human – not artificial – intelligence.
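For readers who build or configure these systems, steps 2 and 4 above can be sketched in a few lines of Python. This is a minimal illustration, not a description of how the Secret Service pilot or any commercial product works: the class and method names, the 0.90 similarity threshold and the 30-day retention period are all assumptions chosen for the example, and the right values are matters of policy and applicable law.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Illustrative assumptions only; real values come from policy and counsel.
MATCH_THRESHOLD = 0.90
RETENTION = timedelta(days=30)


@dataclass
class Capture:
    image_id: str
    similarity: float      # best score against the comparison gallery
    captured_at: datetime


@dataclass
class ReviewQueue:
    pending: list = field(default_factory=list)    # awaiting a human decision
    confirmed: list = field(default_factory=list)  # matches a human confirmed

    def handle(self, capture: Capture) -> str:
        """Step 2: a non-match is never stored. Step 4: a candidate waits for a human."""
        if capture.similarity < MATCH_THRESHOLD:
            return "deleted"
        self.pending.append(capture)
        return "pending_human_review"

    def review(self, image_id: str, is_match: bool) -> str:
        """Record the human reviewer's decision; a rejected candidate is dropped."""
        for i, capture in enumerate(self.pending):
            if capture.image_id == image_id:
                self.pending.pop(i)
                if is_match:
                    self.confirmed.append(capture)
                    return "confirmed"
                return "deleted"
        raise KeyError(image_id)

    def purge_expired(self, now: datetime) -> int:
        """Remove anything held longer than the retention period; return the count removed."""
        before = len(self.pending) + len(self.confirmed)
        self.pending = [c for c in self.pending if now - c.captured_at < RETENTION]
        self.confirmed = [c for c in self.confirmed if now - c.captured_at < RETENTION]
        return before - (len(self.pending) + len(self.confirmed))
```

The point of the design is that the software alone never finalizes a match: the algorithm can only discard an image or refer it to a person, and even confirmed matches expire on a schedule.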

Timothy J. Pastore, Esq., is a Partner in the New York law firm of Duval & Stachenfeld LLP (www.dsllp.com), where he is the Chair of the Security Systems and Real Estate Cybersecurity Practice Groups. Before entering private practice, Mr. Pastore was an officer and Judge Advocate General (JAG) in the U.S. Air Force and a Special Assistant U.S. Attorney with the U.S. Department of Justice. Reach him at (212) 692-5982 or by e-mail at tpastore@dsllp.com.